Monitor calibration might seem complex. Perhaps it is, but you’ll soon be comfy with it if you can grasp some of the basic principles. It’s just a question of breaking the subject down. In this article, we’ll look at six aspects of a seemingly dark art, and how to calibrate your monitor.

1) Luminance / Brightness Level

One thing to know about monitor luminance (or brightness, in simple terms) is that it’s typically the only genuine hardware adjustment you can make to an LCD monitor. You are basically altering the backlighting with a dimmer switch.

The exception is when you select a luminance setting lower than your monitor can natively reach, in which case a software adjustment comes into play. Ideally, you don’t want this, since it eats into the monitor’s gamut (the range of colors it produces) and leaves it open to problems such as banding.
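To get a rough feel for why software dimming costs image quality, here is a minimal sketch. It assumes an 8-bit signal and a hypothetical scaling factor of 0.8 applied to every level, which is just one simple way a software adjustment might lower brightness. The function name is made up for illustration, and real drivers and calibration programs may work differently.

```python
def distinct_levels_after_scaling(scale, bit_depth=8):
    """Count the distinct output levels left when every input level
    is multiplied by `scale` and rounded back to an integer."""
    max_level = 2 ** bit_depth - 1
    outputs = {round(level * scale) for level in range(max_level + 1)}
    return len(outputs)


# Hypothetical example: dimming to 80% in software on an 8-bit signal.
remaining = distinct_levels_after_scaling(0.8)
print(remaining)  # 205 of the original 256 levels survive
```

Fewer distinct levels means coarser steps between neighboring tones, which is where banding in smooth gradients comes from. Dimming the backlight itself leaves all 256 levels intact.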

Always use software that tells you how bright the monitor is and lets you adjust it interactively.

Software versus hardware

Software adjustments are the ones that go through the graphics processor, while hardware adjustments are those that bypass the GPU and address the monitor directly. Software adjustments can cause problems such as banding, which is useful to bear in mind. Expensive monitors tend to allow more in the way of hardware calibration, enabling higher image quality.

What setting to use?

Monitor luminance is measured in candelas per square meter (cd/m2), sometimes referred to as “nits”. A new LCD monitor is usually far too bright (e.g. over 200 cd/m2). Aside from making screen-to-print matching hard, this reduces the monitor lifespan.

You need a calibration device to measure the luminance of your monitor and always return it to the same level, as the backlighting slowly degrades. The trouble with using onscreen monitor settings to do this (e.g. 50% brightness) is that their meaning changes over time.

X-rite i1Display Pro

The arbitrary setting

Although arbitrary, the 120 cd/m2 setting that most software defaults to is a fair place to start. Most monitors can reach that level using the OSD brightness control alone, without resorting to reducing RGB levels and gamut. The setting you use is not critical unless you are explicitly trying to match the screen to a print or print-viewing area.

Dictated by ambient light

Ideally, you should control the ambient lighting in your editing area so you’re free to set the luminance you want. The monitor should be the brightest object in your line of vision. If you’re forced to edit in a bright setting, luminance must be raised so that your eyes are able to see shadow detail in your images. Some calibrators will read ambient light and set parameters accordingly. In controlled situations, this feature is needless and even unhelpful.

The paper-matching method

Many printers set their monitor luminance very low, by which I mean between 80 and 100 cd/m2. The idea is to hold a blank piece of printing paper up next to your screen and lower the luminance until it matches the paper, or just set a low level so that this is more likely.

Potential downsides include a degraded monitor image since not all monitors can achieve this low luminance level without ill effect. Still, you could try it. This is about finding what works for you and your gear.

Matching the print-viewing area

Another way printers set monitor luminance is to match it to the lighting of a dedicated print-viewing booth or area. Although the light in this area may differ from that of the final print destination, it’s useful to note that monitor calibration is never quite an exact science. Also, print display lighting is usually adjustable in intensity. Using this method, the monitor luminance might be as high as 140-150 cd/m2. This setting should be natively achievable by any monitor.
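If you know roughly how bright your viewing area is, you can estimate the luminance a sheet of paper will have under it. The sketch below treats the paper as an ideal matte (Lambertian) surface, for which luminance equals illuminance times reflectance divided by pi. The 500 lux figure and the 0.9 reflectance are assumptions chosen for illustration, not measurements of any particular booth or paper.

```python
import math


def paper_luminance(illuminance_lux, reflectance=0.9):
    """Approximate luminance (cd/m2) of a matte sheet lit at the given
    illuminance, treating it as an ideal diffuse (Lambertian) reflector."""
    return illuminance_lux * reflectance / math.pi


# Assumed example: a viewing area lit at 500 lux, paper reflecting 90%.
print(round(paper_luminance(500), 1))  # 143.2
```

With these assumed numbers the paper lands in the 140-150 cd/m2 range mentioned above. Glossy papers and uneven lighting will behave differently, so treat the result as a starting point for a visual match.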

2) Color Temperature / White Point

Most calibration programs will default to a 6500K white point setting, which is a cool “daylight” white light. This is usually close to the native white point of the monitor, so it’s not a bad setting, but you needn’t accept the software defaults.

By Bhutajata (Own work) [CC BY-SA 4.0], via Wikimedia Commons

Gentle calibration – native white point

If you own a cheap consumer-level monitor or a laptop with low-bit color (that’s most laptops), it’s a good idea to choose a “native white point” setting. This is typically only available with more advanced calibration programs, including the open-source program DisplayCAL.

When you choose a native white point or anything “native” in calibration, you are leaving the monitor untouched. Because this means there are no software adjustments being made, the display is less likely to suffer from issues such as banding.

Correlated color temperature

In physics, a Kelvin color temperature is an exact color of light, determined by the physical temperature of a black body light source. As you probably know, the hotter the source, the “cooler” (bluer) its light becomes.

Monitors don’t work like this, since their light source (LED or fluorescent) doesn’t produce light from heat. Instead, they use a “correlated color temperature” (CCT). One thing to know about correlated color temperature is that it’s not an exact color. It’s a range of colors. This ambiguity is not ideal when trying to match two or more screens.

By en:User:PAR (en:User:PAR) [Public domain], via Wikimedia Commons

The illustration above of the CIE 1931 color space plots Kelvin color temperatures along a curved path known as the “Planckian locus”. Correlated color temperatures are shown as the lines that cross the locus, so a 6000K CCT, for instance, may sit anywhere along a green-to-magenta axis. A genuine 6000K color temperature would rest directly on the Planckian locus at the point where the line crosses, so its color is always the same.

Though color temperatures might not mean the same thing from one monitor to the next, calibration software should be more precise. It’ll use x and y chromaticity coordinates (seen in the graph above) to precisely plot any color temperature. Thus, theoretically, you should be able to match the white point of two different monitors during calibration.
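To illustrate the link between x and y coordinates and a correlated color temperature, here is a small sketch using McCamy’s approximation, a well-known cubic formula for estimating CCT from CIE 1931 xy values. It is only an approximation and is not necessarily what any given calibration program uses. The function name and the second “greener” white are made up for illustration.

```python
def mccamy_cct(x, y):
    """Estimate correlated color temperature (kelvin) from CIE 1931 xy
    chromaticity using McCamy's cubic approximation."""
    n = (x - 0.3320) / (0.1858 - y)
    return 449.0 * n**3 + 3525.0 * n**2 + 6823.3 * n + 5520.33


# D65, the standard "6500K" daylight white point.
d65 = (0.3127, 0.3290)
print(round(mccamy_cct(*d65)))  # roughly 6500

# A second, greener white placed on the same line of constant CCT
# (in this approximation, points sharing the same n share the same CCT).
n = (d65[0] - 0.3320) / (0.1858 - d65[1])
greener = (0.3320 + n * (0.1858 - 0.3390), 0.3390)
print(round(mccamy_cct(*greener)))  # same CCT, different chromaticity
```

The two whites report the same CCT yet have different xy coordinates, which is why matching screens by a Kelvin figure alone is unreliable and why x and y coordinates are the more precise target.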

Even if you manage that, gamut differences are still likely to complicate things. It’s often easier to forget about matching screens and just use the better of them for editing.

Matching print output

Your chosen white point won’t always match the light under which you display or judge prints. For that reason, you might want to experiment with settings. Remember you’ll harm image quality if you bend the white point far from its native setting. In calibration, you’re often seeking a compromise and/or testing the boundaries of your monitor’s performance. If a change does cause problems, you can easily reverse it.

3) Gamma / Tonal Response Curve (TRC)

Digital images are always gamma-encoded after capture. In other words, they’re encoded in a way that corresponds to human eyesight and its non-linear perception of light. Our vision is more sensitive to changes in dark tones than in bright tones. Although digital images are stored thus, they are too bright at this point to represent what we saw. They must be decoded or “corrected” by the monitor.

By I, the copyright holder of this work, hereby publish it under the following license: (Own work) [Public domain], via Wikimedia Commons

A digital camera has a linear perception of light, whereby twice as much light is twice as bright. Gamma encoding and correction alter the tonal range in line with human vision, which is more sensitive to changes in shaded light than in highlights. By the way, the gradients in the above image are smooth. Any color or banding you see is caused by your monitor, and harsh calibration will make it worse.

This is where the monitor’s gamma setting (or tonal response curve) comes in. It corrects the gamma-encoded image so that it looks normal. The gamma setting needed to achieve this is 2.2, which is also the default gamma setting in calibration programs. However, this is another setting that you may stray from if your software allows it.
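The encode-then-correct round trip can be sketched with a plain power law. This is a simplification: it assumes a pure 2.2 gamma, whereas real color spaces such as sRGB use a slightly different piecewise curve. The function names are hypothetical.

```python
def gamma_encode(linear, gamma=2.2):
    """Encode a linear light value (0.0 to 1.0) for storage."""
    return linear ** (1.0 / gamma)


def gamma_decode(encoded, gamma=2.2):
    """Undo the encoding, as a gamma 2.2 display effectively does."""
    return encoded ** gamma


mid_grey = 0.18  # linear reflectance of a typical grey card
encoded = gamma_encode(mid_grey)
print(round(encoded, 3))                # 0.459, much lighter than 0.18
print(round(gamma_decode(encoded), 3))  # 0.18, back to the original
```

The encoded value is far brighter than the scene value, which is why an uncorrected image looks washed out. Decoding with the matching gamma restores the original tone.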

Gentle calibration – native gamma setting

Like the white point setting, the gamma setting is a software adjustment that might degrade the monitor image. If you calibrate with a native gamma setting, you are less likely to harm monitor performance. The only trade-off is that images outside of color-managed programs might look lighter or darker. However, inside color-managed programs, images will display normally.

4) The Look-Up Table (LUT)

Once you’ve dialed your settings into the calibration software, what happens to them next? They’re attached to the ICC profile (created after calibration) in the form of a “vcgt tag”. This then loads into the video card LUT (look-up table) on startup, at which point the screen changes in appearance.
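As a rough picture of what such a table holds, the sketch below builds a simple one-channel correction curve. It assumes the monitor’s native response is a pure power law (a hypothetical measured gamma of 2.4) and that the target is gamma 2.2. Real calibration curves are measured per channel and are rarely this tidy, and the function name is invented for illustration.

```python
def build_correction_lut(native_gamma, target_gamma, size=256):
    """Return a table of values in 0.0 to 1.0. Passing a signal through
    the table and then through a display with `native_gamma` mimics a
    display with `target_gamma`."""
    exponent = target_gamma / native_gamma
    return [(i / (size - 1)) ** exponent for i in range(size)]


# Hypothetical monitor that measures gamma 2.4, calibrated to 2.2.
lut = build_correction_lut(native_gamma=2.4, target_gamma=2.2)

signal = 128
displayed = lut[signal] ** 2.4   # what the corrected monitor shows
target = (signal / 255) ** 2.2   # what a true gamma 2.2 display shows
print(abs(displayed - target) < 1e-9)  # True

# With "native" settings the table is a straight line: nothing changes.
native_lut = build_correction_lut(native_gamma=2.4, target_gamma=2.4)
print(native_lut[signal] == signal / 255)  # True
```

The second case shows why native settings are gentle on the display: the table passes every value through untouched.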

That said, if you’ve chosen only native calibration settings, you’ll see no change to your screen at startup. The Windows desktop may look different under a native gamma setting since it is not color aware. A Mac desktop will remain unchanged.

With expensive monitors, the LUT is often stored in the monitor itself (known as a hardware LUT), bypassing the GPU. One benefit of this is that you can create many calibration profiles and switch easily between them. This is not possible with most lower-end monitors.

5) Third-party calibration programs

High-end monitors come with software that allows all sorts of tricks, but most monitors and programs are less flexible. It’s worth noting, though, that some calibrators work with third-party programs, no matter what software they came with. Conversely, some tie you down to proprietary software, so this is worth checking when you buy a calibrator.

Ironically, one of the things more advanced programs let you do is nothing. In other words, they let you choose “native” calibration settings. Look at DisplayCAL or basICColor programs if you want more flexibility, but check for compatibility with your device first.

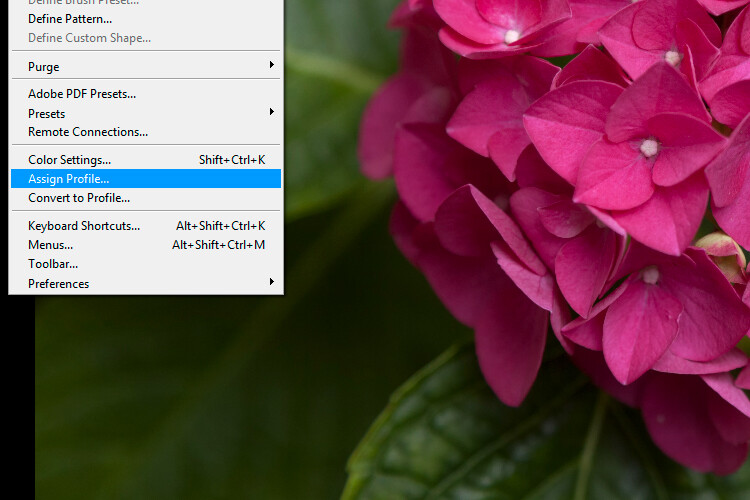

6) Calibration versus Profiling

The word “calibration” is an umbrella term that often refers to the process of calibrating and profiling a monitor. However, it’s useful to note that these are two separate actions. You calibrate a monitor to return it to a known state. Once it’s in that state, you then create a profile for the monitor that describes its current output. This allows it to communicate with other programs and devices and enables a color-managed workflow.

DisplayCAL info at the end of calibration and profiling. Gamut coverage is the proportion of a color space the monitor covers. Gamut volume includes coverage beyond that color space.
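The difference between coverage and volume can be illustrated with a simplified two-dimensional sketch that compares gamut triangles on the CIE xy diagram. This is an assumption-laden illustration, not how DisplayCAL computes its figures, and real tools may work in a three-dimensional color space. The function names are invented, and the published Adobe RGB primaries stand in for a hypothetical wide-gamut monitor.

```python
def cross(a, b, p):
    """Positive when p lies to the left of the directed line a -> b."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def polygon_area(points):
    """Shoelace formula for the area of a simple polygon."""
    total = 0.0
    for i, (x1, y1) in enumerate(points):
        x2, y2 = points[(i + 1) % len(points)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def clip_polygon(subject, clipper):
    """Sutherland-Hodgman clipping of `subject` against a convex,
    counter-clockwise `clipper` polygon."""
    output = list(subject)
    for i, a in enumerate(clipper):
        b = clipper[(i + 1) % len(clipper)]
        candidates, output = output, []
        for j, current in enumerate(candidates):
            previous = candidates[j - 1]
            d_prev, d_cur = cross(a, b, previous), cross(a, b, current)
            if (d_prev >= 0) != (d_cur >= 0):
                # The edge previous -> current crosses the clip line.
                t = d_prev / (d_prev - d_cur)
                output.append((previous[0] + t * (current[0] - previous[0]),
                               previous[1] + t * (current[1] - previous[1])))
            if d_cur >= 0:
                output.append(current)
        if not output:
            break
    return output


def coverage_and_volume(monitor, reference):
    """Coverage: share of the reference the monitor overlaps.
    Volume: the monitor's whole area relative to the reference."""
    ref_area = polygon_area(reference)
    overlap = polygon_area(clip_polygon(monitor, reference))
    return overlap / ref_area, polygon_area(monitor) / ref_area


# Red, green, blue primaries as xy pairs, listed counter-clockwise.
srgb = [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)]
wide = [(0.64, 0.33), (0.21, 0.71), (0.15, 0.06)]  # stand-in monitor

coverage, volume = coverage_and_volume(wide, srgb)
print(f"coverage {coverage:.1%}, volume {volume:.1%}")
```

In this simplified case the stand-in monitor covers all of sRGB, so coverage cannot rise above 100 percent, while volume comes out at roughly 135 percent because it also counts the colors that lie outside sRGB.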

If you can’t afford a calibration device, it’s better to calibrate your monitor using online tools than to do nothing at all. You’ll still need to get the luminance down from its factory level. Check things like black and white levels on an online monitor test page.

You can’t create a proper profile for your monitor using software alone. Any software that claims to do this is using either a generic profile or the sRGB color space.

Finally

I hope this article has helped your understanding of monitor calibration. Ask any questions you like in the comments below and I’ll try to answer them.

The post Six Important Aspects of Monitor Calibration You Need to Know by Glenn Harper appeared first on Digital Photography School.

Since most browsers are now color-managed by default, you can get away with saving photos in the larger Adobe RGB color space for the web. You must embed the profile into the image file if you do this; otherwise, your photos will look desaturated to most people. Only a minority of your audience will benefit from the bigger color space, alas, but it could be worth trying among a group of keen photographers with wide-gamut monitors.
